The debate over whether artificial intelligence can match or surpass human copywriters in creating effective ad copy has intensified as generative AI tools have become widely accessible. In 2026, marketers routinely use models like GPT variants, Gemini, or platform-native tools (such as Google’s Performance Max or Meta’s Advantage+) to generate headlines, descriptions, and calls to action at scale. Yet the core question remains: does AI-generated copy drive higher click-through rates (CTR) and conversion rates than copy crafted by experienced humans?

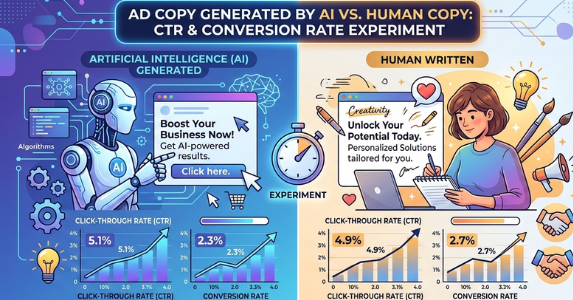

This blog post examines real-world experiments, case studies, and performance data from 2024 through early 2026. The evidence shows a nuanced picture. AI often excels at generating attention-grabbing variations quickly and can achieve comparable or slightly higher CTR in certain contexts, particularly for broad awareness or high-volume testing. However, human-written copy outperforms on conversion rate in most of the tests reviewed here, especially when emotional nuance, psychological triggers, brand authenticity, and message-to-landing-page alignment matter most. The optimal approach in 2026 appears to be hybrid: use AI for volume and iteration, with human oversight for refinement and strategy.

Why This Comparison Matters in 2026

Paid advertising platforms now encourage massive creative testing. Google Responsive Search Ads (RSAs) and Meta’s dynamic creatives allow dozens of headline and description combinations, where AI can produce hundreds of variants in minutes. Human copywriters, by contrast, focus on fewer but more targeted pieces grounded in audience research, pain points, and storytelling.

Key metrics in these experiments include:

- CTR: Percentage of impressions that result in clicks (measures initial appeal).

- Conversion rate: Percentage of clicks that complete a desired action (purchase, sign-up, lead form) (measures persuasion and relevance).

- Related indicators: Cost per acquisition (CPA), conversion value, and revenue per impression.
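
To make these definitions concrete, here is a minimal Python sketch of how each metric is computed from raw totals. The `AdResult` class and every number in it are hypothetical, chosen only to illustrate the arithmetic; none of it comes from the studies discussed in this post.

```python
from dataclasses import dataclass


@dataclass
class AdResult:
    """Raw totals for one ad variant (hypothetical values, non-zero denominators assumed)."""
    impressions: int
    clicks: int
    conversions: int
    spend: float    # total ad spend, in currency units
    revenue: float  # total conversion value, in currency units

    @property
    def ctr(self) -> float:
        # Share of impressions that produced a click.
        return self.clicks / self.impressions

    @property
    def conversion_rate(self) -> float:
        # Share of clicks that completed the desired action.
        return self.conversions / self.clicks

    @property
    def cpa(self) -> float:
        # Spend divided by the number of conversions.
        return self.spend / self.conversions

    @property
    def revenue_per_impression(self) -> float:
        return self.revenue / self.impressions


# Made-up totals: variant A attracts more clicks, variant B converts more of them.
variant_a = AdResult(impressions=10_000, clicks=500, conversions=10, spend=1_000.0, revenue=2_000.0)
variant_b = AdResult(impressions=10_000, clicks=400, conversions=20, spend=1_000.0, revenue=4_000.0)

for name, ad in (("A", variant_a), ("B", variant_b)):
    print(
        f"{name}: CTR={ad.ctr:.2%}  CVR={ad.conversion_rate:.2%}  "
        f"CPA={ad.cpa:.2f}  revenue/impression={ad.revenue_per_impression:.3f}"
    )
```

In this made-up case, variant A wins on CTR while variant B wins on conversion rate, CPA, and revenue per impression, which is why CTR alone is a weak basis for picking a winner.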

Studies reveal trade-offs. AI tends to produce “safe” but generic copy that performs well on surface-level engagement. Human copy injects specificity, urgency, social proof nuances, and emotional resonance that convert better downstream.

Key Experiments and Case Studies

Several independent tests and platform analyses provide concrete data.

- Google Ads Responsive Search Ads Analysis (Groas.ai, 2025, 50+ campaigns): Human-written RSAs generated 214% more conversions on average than purely AI-generated versions, despite receiving fewer impressions. Human ads showed 462% higher conversion rates from clicks, 360% higher conversion value, 423% more phone calls, and 234% more form submissions. AI versions had lower CPC in some cases but higher overall CPA due to poorer traffic quality. The analysis attributes the gap to human writers layering psychological triggers (for example, authority signals rather than mere social proof) that AI tended to flatten into generic phrasing.

- Taboola/Columbia University Study (2026, hundreds of thousands of ads, 500M+ impressions): A large-scale academic collaboration (Columbia, Harvard, TUM, Carnegie Mellon) used a “sibling ads” method to compare matched AI-generated and human-made ads from the same campaigns. Raw data showed AI ads with a slightly higher CTR (0.76% vs. 0.65% for human). After tight statistical controls, performance was comparable. Crucially, downstream conversions held steady or improved with AI visuals and copy when the ads were not perceived as artificial. This suggests AI can attract clicks without sacrificing traffic quality when prompts are strong and outputs are refined.

- Search Engine Journal Google Ads Test (2024, revisited in later analyses): Human-written RSAs achieved 4.98% CTR vs. 3.65% for AI-generated (using Copy.ai). The human ads saw 60% more clicks, 45% more impressions, and lower CPC ($4.85 vs. $6.05). While dated, similar patterns appear in 2025 replications where human nuance wins on relevance.

- LinkedIn/Multi-Channel Comparative Study (Anant Goel, ~2025, 2,000+ campaigns): Human copy averaged 4.5% CTR and 15.3% engagement rate vs. 3.8% CTR and 12.7% engagement for AI. Conversion rates followed the same pattern: 3.1% human vs. 2.5% AI. Human copy held a modest edge on each reported metric, which the study ties to persuasion depth.

- Mana Communications A/B Tests (2025 summaries): In headline tests, AI versions gained 11% higher CTR, but human headlines delivered 17% higher conversion rates. In email tests, AI variants had 5% higher open rates, but human variants generated 22% more revenue per send, a difference attributed to storytelling.

- Meta/Facebook Ad Experiments (Various 2025 cases, e.g., Neil Patel, KOSE): Results vary by setup. In one test, human creatives outperformed AI 68% of the time on conversions. In Meta Advantage+ scenarios, AI copy achieved comparable CTR (1.07% vs. 1.08%) along with a 15% lower cost per link click and a lower CPM. Hybrid modifications (AI variants built from a human-written base) often lifted performance.

Additional aggregates (Amra & Elma 2025 stats compilation) show hybrids (human-edited AI) gaining +26% CTR in some Facebook tests, while pure AI sometimes hits +19% CTR in simple formats but lags in complex funnels.

What the Data Reveals: Patterns Across Studies

- CTR Advantage: Mixed. AI frequently wins or ties on raw clicks (e.g., Taboola 0.76% vs. 0.65%, some Pencil/Zebracat +19-38% lifts) because it generates punchy, optimized hooks at scale. Humans win when specificity and differentiation are needed (e.g., SEJ 4.98% vs. 3.65%).

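- Conversion Rate Advantage: Favors humans in most tests (214% more conversions in Groas, 17-24% higher conversion rates in others). The Taboola study, where conversions were comparable, is the main exception. AI struggles with emotional depth, objection handling, and precise alignment to user intent.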

- Cost Efficiency: AI lowers production costs and enables rapid testing, reducing effective CPA in high-volume scenarios. Humans deliver better ROI on qualified traffic.

- Hybrid Wins: Most consistent performer. AI for ideation/variants + human editing for voice, psychology, and testing yields 15-42% lifts in ROI across reports.
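
Because the studies report a mix of absolute rates and percentage lifts, it helps to convert the quoted rate pairs into relative lifts before comparing them. The sketch below does that for the rate pairs quoted in this post; the helper name `relative_lift` and the dictionary layout are illustrative choices, not something taken from the studies.

```python
def relative_lift(rate: float, baseline: float) -> float:
    """Relative difference of `rate` over `baseline`, as a fraction (0.10 means +10%)."""
    return (rate - baseline) / baseline


# (winner rate, comparison rate) in percent, as quoted in the case studies above.
reported_pairs = {
    "SEJ CTR, human over AI": (4.98, 3.65),
    "Taboola raw CTR, AI over human": (0.76, 0.65),
    "LinkedIn study CTR, human over AI": (4.5, 3.8),
    "LinkedIn study conversion rate, human over AI": (3.1, 2.5),
}

lifts = {label: relative_lift(a, b) for label, (a, b) in reported_pairs.items()}

for label, lift in lifts.items():
    print(f"{label}: {lift:+.1%}")
```

On these figures, the SEJ CTR gap works out to roughly 36%, the raw Taboola CTR gap to roughly 17% in AI's favor, and the LinkedIn study's conversion gap to 24%, which is the upper end of the 17-24% range cited above.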

Why Humans Still Hold the Edge on Conversions

AI copy often lacks:

- Subtle psychological layering (e.g., authority signals tailored to buyer stage).

- Authentic storytelling or cultural fluency.

- Brand-specific voice that builds trust.

- Avoidance of “slop” (generic phrases that feel robotic).

Humans excel at empathy-driven persuasion, which matters more post-click.

Practical Recommendations for Marketers in 2026

- Use AI for volume: Generate 50-100 variants per campaign to feed platforms.

- Apply human refinement: Edit top AI outputs for emotion, specificity, and alignment.

- Run structured A/B tests: Split budgets between pure AI, pure human, and hybrid variants. Keep each test running until the difference reaches statistical significance.

- Focus on funnel stage: AI for top-of-funnel awareness; humans for bottom-funnel conversion.

- Monitor perception: Watch for signs that audiences read the copy as AI-generated, and remove overt AI tells if they hurt trust.

- Iterate frequently: Refresh copy every 4-6 weeks as audiences adapt.
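
For the A/B testing recommendation, one common way to check whether a conversion-rate difference is statistically significant is a two-proportion z-test. The sketch below is a minimal version using only the standard library. It is one reasonable choice, not the method used by any study cited here, and it assumes independent samples with reasonably large counts. The conversion rates (3.1% vs. 2.5%) echo the LinkedIn study above, but the click counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical click counts: same 3.1% vs. 2.5% rates at two traffic levels.
small_z, small_p = two_proportion_z_test(62, 2_000, 50, 2_000)
large_z, large_p = two_proportion_z_test(620, 20_000, 500, 20_000)

print(f"2,000 clicks per arm:  z={small_z:.2f}, p={small_p:.3f}")
print(f"20,000 clicks per arm: z={large_z:.2f}, p={large_p:.5f}")
```

With 2,000 clicks per arm the gap is not significant at the usual 0.05 level, while the same rates at 20,000 clicks per arm are. A gap of this size needs substantial traffic before it can be trusted, which is the practical reason to avoid calling a winner early.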

Final Thoughts

No single winner exists in the AI vs. human ad copy debate; context determines the outcome. For rapid scaling and initial engagement, AI delivers speed and competitive CTR. For meaningful business results (higher conversions, lower CPA, greater revenue), human insight remains superior in most of the paid advertising tests reviewed here. The most effective strategy combines both: AI as a powerful accelerator, humans as the strategic driver. Marketers who master this partnership will outperform those relying on one side alone. Test rigorously in your own accounts, because platform algorithms, audiences, and creative quality evolve quickly. The data through early 2026 points toward collaboration over competition.